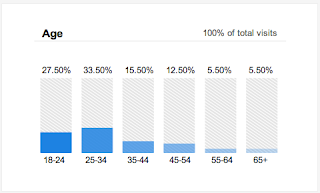

Either I am severely mistaken, or there is something wrong with Google's machine learning inference software. You are either much younger, or young at heart.

And you are of ambiguous gender, too. ;-)

The reason for this classification? Because you spend time reading about technology. And everyone knows that men, not women, read about technology, right?

On a more serious note, please take the time to read Evgeny Morozov's "The Real Privacy Problem." Here are a few juicy paragraphs to whet your appetite, but you should read the whole thing.

In case after case, Simitis argued, we stood to lose. Instead of getting more context for decisions, we would get less; instead of seeing the logic driving our bureaucratic systems and making that logic more accurate and less Kafkaesque, we would get more confusion because decision making was becoming automated and no one knew how exactly the algorithms worked. We would perceive a murkier picture of what makes our social institutions work; despite the promise of greater personalization and empowerment, the interactive systems would provide only an illusion of more participation. As a result, “interactive systems … suggest individual activity where in fact no more than stereotyped reactions occur.”

If you think Simitis was describing a future that never came to pass, consider a recent paper on the transparency of automated prediction systems by Tal Zarsky, one of the world’s leading experts on the politics and ethics of data mining. He notes that “data mining might point to individuals and events, indicating elevated risk, without telling us why they were selected.” As it happens, the degree of interpretability is one of the most consequential policy decisions to be made in designing data-mining systems. Zarsky sees vast implications for democracy here:

A non-interpretable process might follow from a data-mining analysis which is not explainable in human language. Here, the software makes its selection decisions based upon multiple variables (even thousands) … It would be difficult for the government to provide a detailed response when asked why an individual was singled out to receive differentiated treatment by an automated recommendation system. The most the government could say is that this is what the algorithm found based on previous cases.

Someone asked when I was going to post the backlog of sewing and knitting projects. Stay tuned, because I do have some stuff to share for our girly sides. But first, I want to take a statistical detour about how my local public middle school, a school that is above average on an absolute scale and compared to schools with similar demographics, was labeled a "failing" school by NCLB (No Child Left Behind).

We are in "Program Improvement" status for the second year in a row because of what Bad Dad calls a statistical fluke. Actually, it is not a fluke. It is an entirely predictable misclassification by a bad statistical algorithm. I would like the lawmakers who passed NCLB to take a statistics test and publicly post their scores on their congressional websites. Would that be too much to ask for in the name of democracy?

Could someone come up with a blog badge for blogs of indeterminate gender?

When I first heard that you could look at how Google classifies you, I went and checked, and Google thinks I am male. It gets my age about right, but it thinks I am interested in clocks for some reason.

Thanks for the reading recommendation. I'll definitely check it out. I am a database geek and love data mining... but I am increasingly disturbed by how algorithms control the information I see. I am actively working to break out of that.

For your database work, do you care about collisions and timing? That could be why they think you care about clocks.

I'd be willing to bet money that the vast majority in Congress have little understanding of statistical analysis. Also, Google thinks I am a much younger man. We could have predicted this result by doing an analysis of the expectations of the programmers (age, gender, education).

As far as I can tell, NCLB was designed from the start to make all public schools 'fail', so that they could be replaced by for-profit charters. It was one of the first beachheads for corporate education reform. According to NCLB, all students in all schools have to achieve a 'proficient' rating in whatever test is the current flavour of the day, by next year. This was obviously nonsense, but Congress passed it nonetheless. Arne Duncan has retreated from this with a variety of dodges to avoid admitting NCLB is unworkable, yet the corporate reform juggernaut rolls on.

Statistics and algorithms can be corrected, but the corrections can't fix politics.

http://www.edweek.org/ew/issues/no-child-left-behind/

I'm reading Reign of Error and completely agree with both of you. It's demoralizing to the professionals trying to teach in this gotcha environment.

I don't keep Google cookies, so they don't really know who I am :-). But alas, I'm sure they think I'm male, because I read lots of techno-stuff. Sigh. A tragically wrong assumption and, worse, probably circular reinforcement.

Conclusion: use DuckDuckGo...

I should create a blog badge:

"I had a Bayesian sex-change operation!"